Hyperscaling Responsibly: Data Center Design for Sustainability

Design techniques have given rise to effective strategies that aim to minimize operational cost, environmental impact and neighborhood disturbance.

The expansion in data center development across the country has drawn scrutiny from local communities over the perceived impact of operations on local resources. Operators are equally concerned, and many are adopting modern design techniques that marry precision efficiency with sustainable operations to satisfy their own and their neighbors’ priorities. Power and water are typically the biggest challenges.

In 2024, U.S. data centers used 180 terawatt-hours (TWh) of electricity, enough to power 16 million homes for an entire year. That number is projected to more than double to 420 TWh by 2030. The demand for water used to cool AI servers is expected to reach 300 billion gallons over the same period, which is equivalent to almost a year’s worth of water consumption for New York City’s 9 million residents.

With data center construction accelerating, building sustainably has become essential and a primary driver in the design process. Recognizing resource limitations, developers and hyperscalers — major cloud service providers, including Amazon, Google and Microsoft — have vested interests in maximizing resource efficiency and green energy adoption. That means reliability, durability, resource stewardship and community acceptance have become primary factors in the design and build process.

Current design techniques have given rise to effective strategies developers can use to minimize operational cost, environmental impact and neighborhood disturbance. Leading-edge developers, architects and engineering teams are addressing the environmental impact of data center development and operations through sustainable, integrated design.

Solving the power problem

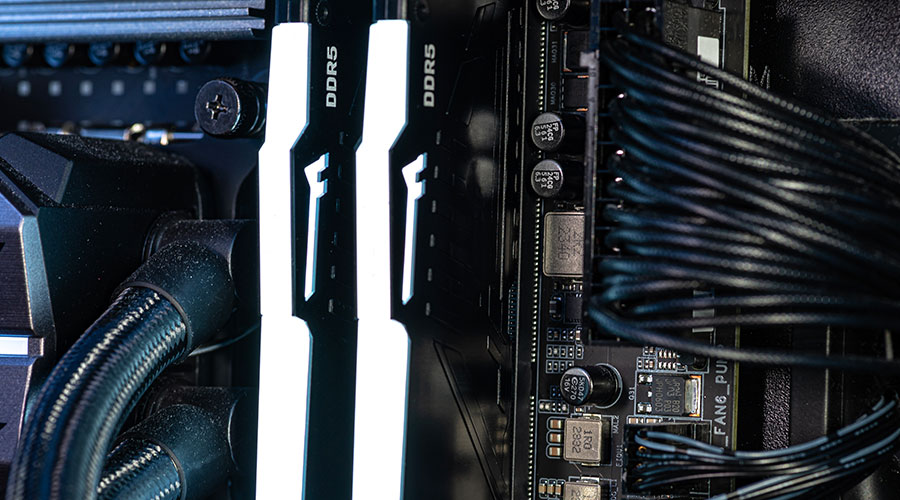

While data centers consume a large amount of power, they are extremely efficient. About 80 percent of the energy used by data centers powers the servers themselves, and equipment suppliers have responded by developing the most efficient chips and servers.

The average annual power usage effectiveness (PUE), the ratio of the total annual energy used to run the data center to the total annual energy drawn by the IT equipment, has dropped significantly. A PUE of 1.0 would mean every unit of energy reaches the IT equipment; the average is now 1.56, down from a peak of 2.50. From there, the design priority shifts to minimizing non-compute power consumption across the facility.
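As a minimal sketch of the PUE arithmetic described above, the following Python computes the ratio and the share of facility energy that never reaches the IT equipment. The function names and the meter readings in the test values are hypothetical, chosen only for illustration; the two PUE averages are the ones quoted in the text.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_fraction(pue_value: float) -> float:
    """Share of total facility energy spent on non-IT loads (cooling, losses, lighting)."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue_value


# Compare the two averages quoted in the article.
for label, value in (("peak average", 2.50), ("current average", 1.56)):
    print(f"{label}: PUE {value:.2f}, overhead {overhead_fraction(value):.0%}")
```

Under this definition, a PUE of 2.50 means about 60 percent of facility energy goes to non-IT loads, while a PUE of 1.56 brings that share down to about 36 percent.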

In the pre-design phase, many utilities and local authorities require upfront municipal bonds to fund generation and transmission upgrades that serve the new data center load and provide much-needed grid modernization to accommodate increased electrical demand and the green energy transition. The aging grid was a vulnerability long before the data center boom, so this approach serves the interests of data center operators and their neighbors.

Many hyperscalers are also tapping into renewable energy, especially solar, hydropower and geothermal. For example, Google, Meta and others have recently partnered with geothermal suppliers to underwrite the construction of new generation capacity to supply their data centers. This approach allows emerging technologies to reach commercial scale while delivering the always-on power that AI workloads require. These partnerships provide a guaranteed private-sector market for new renewable energy technology and are key to supporting the innovations that will eventually enrich all our lives.

The Bitcoin mining sector has been an early adopter of grid-responsive operations — a strategy colocation and hyperscale operators can also use to manage demand. By participating in utility demand-response programs, operators can modulate their consumption based on real-time demand to leverage financial incentives and avoid overtaxing the grid.

Because redundant power is essential for ensuring high reliability, sites commonly use backup generators, battery storage or a combination of both. Designing for ample on-site capacity allows operators to take themselves off the grid and become self-sustaining during periods of high demand or use battery storage to bank power when demand is low. Instead of being merely a consumer, operators become grid participants as part of a virtual power plant, absorbing or releasing power as demand dictates.
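The grid-participant behavior described above can be sketched as a simple rule: discharge on-site storage when the grid is stressed, recharge when demand is low, and otherwise stay idle. This is an illustrative sketch only, not any utility's or operator's actual control logic. The `Battery` class, the `dispatch` function, the grid-load signal and the 0.9 and 0.5 thresholds are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Battery:
    capacity_kwh: float  # total usable storage
    charge_kwh: float    # energy currently stored
    max_rate_kw: float   # maximum charge or discharge power


def dispatch(battery: Battery, grid_load_fraction: float,
             hours: float = 1.0, high: float = 0.9, low: float = 0.5) -> float:
    """Return battery power for this interval in kW.

    Positive = discharging to carry site load (relieving the grid).
    Negative = charging from the grid while demand is low.
    grid_load_fraction is a hypothetical 0-1 signal of how loaded the grid is.
    """
    if grid_load_fraction >= high:
        # Grid is stressed: discharge, limited by rate and stored energy.
        power = min(battery.max_rate_kw, battery.charge_kwh / hours)
        battery.charge_kwh -= power * hours
        return power
    if grid_load_fraction <= low:
        # Grid has slack: bank power, limited by rate and remaining headroom.
        headroom = battery.capacity_kwh - battery.charge_kwh
        power = min(battery.max_rate_kw, headroom / hours)
        battery.charge_kwh += power * hours
        return -power
    return 0.0  # Normal conditions: leave the battery untouched.
```

A production system would also account for round-trip losses, reserve margins for backup duty and the terms of the specific demand-response program, none of which are modeled here.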

Managing water woes

Equipment cooling is essential for optimal performance of data centers, and the water required is often a major sticking point in facility approval and a source of community pushback. While some operators have tried to mitigate this issue by opting for waterless, air-cooled systems, this approach leads to added electricity demands.

Water has a higher heat capacity than air, and liquid-cooled servers can operate at higher temperatures, so they are inherently more efficient than their air-cooled predecessors. By pairing liquid-cooled loads with the higher operating temperatures made possible by innovations from hardware providers, facility designers can reduce or eliminate the most power-hungry cooling systems.
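To put the heat-capacity point in rough numbers, the sketch below compares how much coolant flow is needed to carry away the same heat with water and with air. The fluid properties are approximate textbook values at room conditions, and the 100 kW load and 10 K temperature rise are hypothetical figures chosen only for illustration.

```python
# Approximate room-condition properties (textbook values, not site measurements).
WATER = {"density_kg_m3": 1000.0, "cp_kj_per_kg_k": 4.18}
AIR = {"density_kg_m3": 1.2, "cp_kj_per_kg_k": 1.005}


def volumetric_heat_capacity(fluid: dict) -> float:
    """Heat absorbed per cubic meter per kelvin of temperature rise, in kJ/(m^3*K)."""
    return fluid["density_kg_m3"] * fluid["cp_kj_per_kg_k"]


def flow_needed_m3_per_s(heat_kw: float, delta_t_k: float, fluid: dict) -> float:
    """Volume flow required to remove heat_kw with a coolant temperature rise of delta_t_k.

    Based on Q = rho * cp * flow * delta_T, with Q in kW (kJ/s).
    """
    return heat_kw / (volumetric_heat_capacity(fluid) * delta_t_k)


ratio = volumetric_heat_capacity(WATER) / volumetric_heat_capacity(AIR)
print(f"Water carries about {ratio:,.0f}x more heat per unit volume than air")

# Hypothetical example: 100 kW of server heat, 10 K coolant temperature rise.
for name, fluid in (("water", WATER), ("air", AIR)):
    flow = flow_needed_m3_per_s(100.0, 10.0, fluid)
    print(f"{name}: {flow:.4f} m^3/s")
```

With these assumed values, water absorbs on the order of a few thousand times more heat per unit volume than air, which is why moving air through a facility takes so much more fan energy than pumping liquid to the same loads.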

Another design option is to capture heat output for use in other applications. This practice is common in the European Union, where district heating loops provide hot water heat to nearby neighborhoods. Because residential proximity is less common in the United States, many developers are exploring industrial applications for their excess heat, including food processing and manufacturing facilities that can be co-located near the data center. Greenhouses and other agricultural use cases are also viable options.

Julia Diaz, P.E., is a mechanical engineer for the mission critical sector at HED, an architecture, engineering and design firm.
