How to Keep Legacy Data Centers Productive

Given the rapid pace of change in the data center world, facility managers (FMs) must work closely with IT to make sure the data center still meets organizational needs.

Despite the growing use of cloud and colocation strategies, legacy enterprise data centers still handle a vast amount of data processing and storage. These existing data centers range in age from almost new to decades old. But even newer data centers find it necessary to evolve and adapt to changing IT infrastructures.

A range of forces is driving change in existing data centers. One is the increasing use of hybrid IT arrangements, rather than limiting information processing and storage to a single environment. Today, many organizations are using cloud, edge, colocation, and managed hosting along with on-premises data centers. And more change is on the horizon. “By 2025, the number of micro data centers will quadruple due to technological advances such as 5G, new batteries, hyperconverged infrastructure, and various software-defined systems,” says Henrique Cecci, an analyst with Gartner, in “The Future of Enterprise Data Centers – What’s Next?”

Hybrid solutions typically mean that the legacy data center’s mechanical and electrical systems are being underused. The opposite problem arises when more computational capability is added within existing space to keep up with changing IT needs. That approach requires careful engineering to prevent overtaxing the data center’s existing electrical and mechanical systems.

“By 2025, enterprise data centers will have five times more computational capacity per physical area (square foot) than today,” says Cecci.

Other developments are bringing changes to the demands placed on legacy data centers. Cecci also cites advances in power, cooling, telecommunications, and artificial intelligence (AI), along with hardware and software advances, as transforming enterprise data centers.

“Traditional on-premises data center models must evolve to play a role in modern enterprise information management,” says Cecci.

Given all the changes happening in the data world, it’s crucial that facility managers and the IT department work closely together, so that both are meeting the organization’s current mission statement for data management.

Increasing capacity

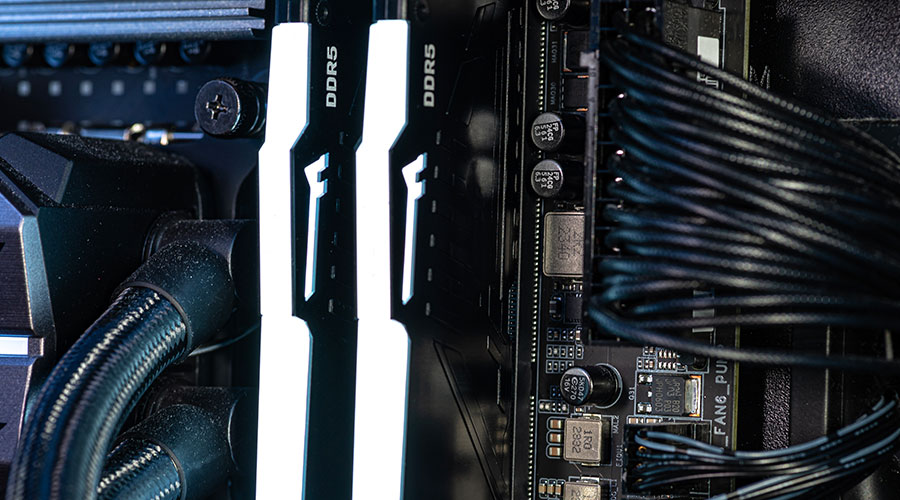

Today’s servers are miniaturized versions of earlier models. They run at extremely high speeds and generate far more heat in a smaller footprint. But just because the space can physically hold the newer models does not mean that the legacy data center was designed to handle them.

“Many legacy data centers were designed around a set of power and cooling density standards that have dramatically changed over the past 10 years,” explains Steve Smith, director of physical IT network for Arvest Bank Operations.

Virtual systems reduce servers into compact, dense machines with internal redundancy. “While this reduces sprawl, it has increased watt density and cooling requirements into smaller, often containerized pre-stack conditions,” says Smith. Although the requirement for total cooling and power may actually have decreased, it now must be effectively delivered in a smaller footprint.
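To make the density point concrete, here is a minimal Python sketch. The loads and floor areas are hypothetical figures chosen for illustration, not numbers from Smith or Arvest Bank; the function name is likewise an assumption.

```python
def watt_density(total_watts: float, area_sq_ft: float) -> float:
    """Return power density in watts per square foot."""
    if area_sq_ft <= 0:
        raise ValueError("area_sq_ft must be positive")
    return total_watts / area_sq_ft


# Hypothetical legacy layout: 100 kW spread across 2,000 sq ft.
legacy_density = watt_density(100_000, 2_000)

# Hypothetical consolidated layout: a lower total load (80 kW),
# but packed into 400 sq ft of contained racks.
consolidated_density = watt_density(80_000, 400)

print(f"Legacy:       {legacy_density:.0f} W/sq ft")
print(f"Consolidated: {consolidated_density:.0f} W/sq ft")
```

In this illustration the total load drops by 20 percent while the density the power and cooling systems must serve in that footprint rises fourfold.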

Smith says that getting the power to the smaller area is not complicated, but it can be challenging to concentrate cooling volume to match fan capabilities with British thermal unit (BTU) capacity, because the initial design may have relied on a spread-out cooling layout.
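A rough way to see how heat load and fan capacity relate is the standard sensible-heat rule of thumb. The Python sketch below is an approximation only: it assumes air near sea-level density (the 1.08 factor), that essentially all electrical power drawn by the equipment becomes heat, and a hypothetical 10 kW rack with a 20°F air temperature rise. None of these figures come from Smith, and a real design would rely on an engineer's calculations.

```python
BTU_PER_HR_PER_WATT = 3.412   # 1 watt is about 3.412 BTU per hour
SENSIBLE_HEAT_FACTOR = 1.08   # rule-of-thumb constant for standard sea-level air


def watts_to_btu_per_hr(watts: float) -> float:
    """Convert an electrical load in watts to a heat load in BTU/hr."""
    return watts * BTU_PER_HR_PER_WATT


def required_airflow_cfm(watts: float, delta_t_f: float) -> float:
    """Estimate the airflow (cubic feet per minute) needed to remove a heat
    load, given the air temperature rise across the equipment in °F."""
    if delta_t_f <= 0:
        raise ValueError("delta_t_f must be positive")
    return watts_to_btu_per_hr(watts) / (SENSIBLE_HEAT_FACTOR * delta_t_f)


# Hypothetical example: one 10 kW rack with a 20°F rise from inlet to exhaust.
rack_watts = 10_000
print(f"Heat load: {watts_to_btu_per_hr(rack_watts):,.0f} BTU/hr")
print(f"Airflow:   {required_airflow_cfm(rack_watts, 20):,.0f} CFM")
```

Under these assumptions, the rack needs roughly 1,580 CFM delivered to it. If the rise across the equipment were only 10°F, the required airflow would double, which is why concentrated loads put pressure on fan capacity.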

“Static pressure management and controlling the cool air delivery temperature become a focus in these spaces,” says Smith, “so concentrated heat loads can receive concentrated cooling capacity without unintentionally overcooling the entire space.”
