Data Center Availability, Reliability Hinge On Numerous Factors

In the data center industry, the terms "reliability" and "availability" are often used interchangeably to describe expected levels of performance. Though data center reliability and availability are related, they describe distinctly different characteristics of performance.

In science, reliability is linked with repeatability. If the same experiment is done over and over with the same results, then it has a high degree of reliability. Two common means of measuring reliability are:

- Mean time between failures (MTBF), which is the total time in service divided by the number of failures.

- Failure rate, which is the number of failures divided by the total time in service.
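As defined above, the two measures are reciprocals of one another. A minimal Python sketch of both calculations follows; the function names and the sample figures (one year in service, four failures) are illustrative assumptions, not data from any real facility.

```python
def mtbf(hours_in_service: float, failures: int) -> float:
    """Mean time between failures: total time in service / number of failures."""
    if hours_in_service <= 0:
        raise ValueError("hours_in_service must be positive")
    if failures < 0:
        raise ValueError("failures cannot be negative")
    if failures == 0:
        # No failures observed yet, so the ratio is unbounded.
        return float("inf")
    return hours_in_service / failures


def failure_rate(hours_in_service: float, failures: int) -> float:
    """Failure rate: number of failures / total time in service."""
    if hours_in_service <= 0:
        raise ValueError("hours_in_service must be positive")
    if failures < 0:
        raise ValueError("failures cannot be negative")
    return failures / hours_in_service


if __name__ == "__main__":
    # Hypothetical example: one year (8,760 hours) in service, four failures.
    hours = 8760.0
    failures = 4
    print(f"MTBF: {mtbf(hours, failures):.1f} hours")
    print(f"Failure rate: {failure_rate(hours, failures):.6f} failures per hour")
```

Because each value is the inverse of the other, reporting either one is enough to recover the second, provided the time unit is stated.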

The term "reliability" in a technical sense is often coupled with "validity." Validity is how closely a measurement matches the actual value. If you step on a scale 10 times and get the same result each time, the scale is reliable. But if the measured weight is incorrect, the measurement is not valid.

Availability is a measure of how often something is in an operable state. Simply put, availability is uptime divided by total time measured. Generally speaking, something can be available but unreliable, and can be reliable but not valid. A computer room air conditioner may be running for years (high availability) but not doing a very good job of maintaining stable room conditions (low reliability). And if the controlling thermostat is out of calibration, the measured performance is not valid.
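The availability ratio described above (uptime divided by total time measured) can be sketched in a few lines of Python. The function names, the 8,760-hour year, and the sample figures are assumptions chosen for illustration only.

```python
HOURS_PER_YEAR = 8760.0  # assumes a 365-day year; leap years are ignored


def availability(uptime_hours: float, total_hours: float) -> float:
    """Availability as a fraction: uptime / total time measured."""
    if total_hours <= 0:
        raise ValueError("total_hours must be positive")
    if not 0 <= uptime_hours <= total_hours:
        raise ValueError("uptime_hours must be between 0 and total_hours")
    return uptime_hours / total_hours


def annual_downtime_hours(avail: float) -> float:
    """Hours of downtime per year implied by an availability fraction."""
    if not 0 <= avail <= 1:
        raise ValueError("avail must be between 0 and 1")
    return (1.0 - avail) * HOURS_PER_YEAR


if __name__ == "__main__":
    # Hypothetical example: 8.76 hours of outage over one year.
    a = availability(HOURS_PER_YEAR - 8.76, HOURS_PER_YEAR)
    print(f"Availability: {a:.4%}")
    print(f"Implied downtime: {annual_downtime_hours(a):.2f} hours per year")
```

Note that this figure says nothing about how the system performed while it was up, which is why availability alone does not establish reliability or validity.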

So how does one measure the reliability of a data center? The answer depends on what the overall goals and expectations are for the facility's operations. A reliable data center can be trusted to provide continuous operations as long as it is operated properly and within the overarching design intent and limitations. Some high performance computing (supercomputer) facilities do not require 100 percent uptime. They can schedule full outages between "runs." They may be built with Tier 1 or Tier 2 infrastructure topologies because they do not need to be concurrently maintainable. Their overall availability may be lower than Tier 3 and Tier 4 facilities, but if their failure rate during operation is very low, they are dependable and considered to have high reliability.

But the goal of most data centers is sustained continuous operation of the IT equipment. In these cases, the goal is to deliver 100 percent computer room availability. To achieve 100 percent availability, both reliability and validity are needed. The operating processes that keep the data center running must be repeatable in that they consistently result in the expected outcome, and that outcome must correspond to the desired result.

Two kinds of factors affect the reliability and availability of a data center: physical infrastructure and operating staff.

Physical Infrastructure

In general, the critical facilities industry does an exceptional job at delivering high quality, high performance infrastructure. As the industry evolved, redundancy schemes progressed from "N," to "N+1," to "2N," to "2(N+1)" topologies (where "N" is the minimum number of pieces of equipment required to meet the demand of a given system). Engineers and designers have learned the lessons afforded by time and experience to apply these strategies down to each critical system and sub-system, including the associated controls and interfaces between systems. Designs can now be certified as simultaneously "concurrently maintainable" and "fault-tolerant." These designs have not only eliminated single points of failure, but remain fault-tolerant even when equipment and systems have been isolated for maintenance and repairs.
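To make the redundancy notation concrete, the sketch below computes how many units each scheme installs for a given load. It assumes "N" is found by dividing the load by the capacity of one unit and rounding up; the function names and the 900 kW and 250 kW figures are hypothetical and only illustrate the arithmetic.

```python
import math


def required_units(load_kw: float, unit_capacity_kw: float) -> int:
    """N: the minimum number of units needed to meet the load."""
    if load_kw <= 0 or unit_capacity_kw <= 0:
        raise ValueError("load and unit capacity must be positive")
    return math.ceil(load_kw / unit_capacity_kw)


def installed_units(n: int, scheme: str) -> int:
    """Total units installed under a given redundancy scheme."""
    counts = {
        "N": n,                 # no redundancy
        "N+1": n + 1,           # one spare unit
        "2N": 2 * n,            # two full sets of N
        "2(N+1)": 2 * (n + 1),  # two full sets, each with its own spare
    }
    if scheme not in counts:
        raise ValueError(f"unknown scheme: {scheme}")
    return counts[scheme]


if __name__ == "__main__":
    # Hypothetical example: a 900 kW load served by 250 kW units.
    n = required_units(900, 250)
    for scheme in ("N", "N+1", "2N", "2(N+1)"):
        print(f"{scheme}: {installed_units(n, scheme)} units")
```

The unit count alone does not make a design concurrently maintainable or fault-tolerant; that also depends on distribution paths, controls, and the interfaces between systems.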

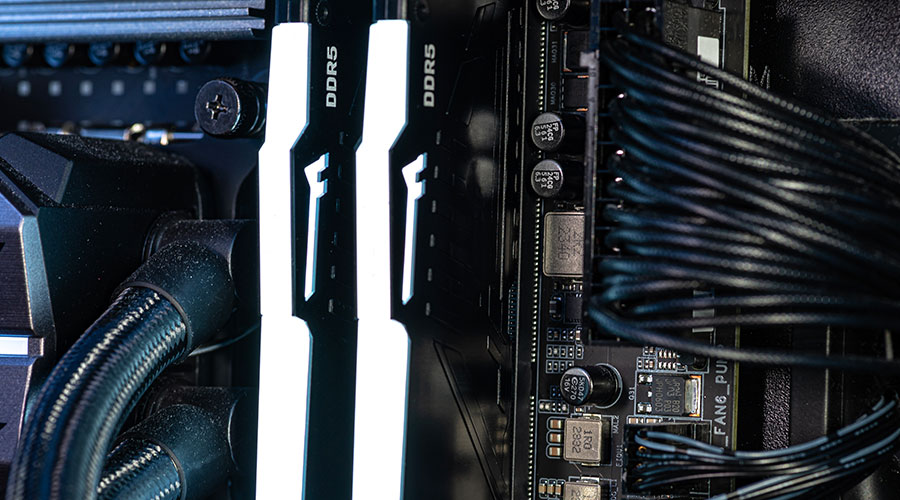

The downside is that these designs have introduced considerable complexity, along with complicated switching procedures and sequences of operations. As a result, reliance on computers to actively monitor the health and status of equipment and system performance, and to take automatic action when required, has greatly increased. The good news is that computers are some of the most reliable "machines" ever made. They can monitor almost continuously (limited by baud rate, polling time, scan rates, etc.) and can be relied upon to execute their programmed logic flawlessly over and over again.

Common Sense Principles Improve Data Center Operations

Keeping these common sense principles in mind can help improve availability and reliability in a data center.

- Simplicity is more reliable than complexity.

- Computers are more reliable than people.

- Equipment performance degrades over time and use.

- Higher-quality equipment has better availability and reliability than lower-quality equipment.

- The accuracy of uncalibrated sensors and transducers decreases over time.

- Starting and stopping equipment imparts more stress than stable operation does.

By Terry L. Rodgers
