SPONSORED

Schneider Electric - Branded Feature

Digital Remote Monitoring and How it Changes Data Center Operations

Executive summary

Today’s data center power and cooling

infrastructure has roughly 3 times more

data points / notifications than it did 10

years ago. Traditional data center remote

monitoring services have been available

for over 10 years but were not designed to

support this amount of data monitoring and

the associated alarms, let alone extract

value from the data. This paper explains

how seven trends are defining monitoring

service requirements and how this will lead

to improvements in data center operations

and maintenance.

Introduction

Data center digital remote monitoring services1 have been around for over 10 years

but older offline traditional services are limited compared to new digital2 services

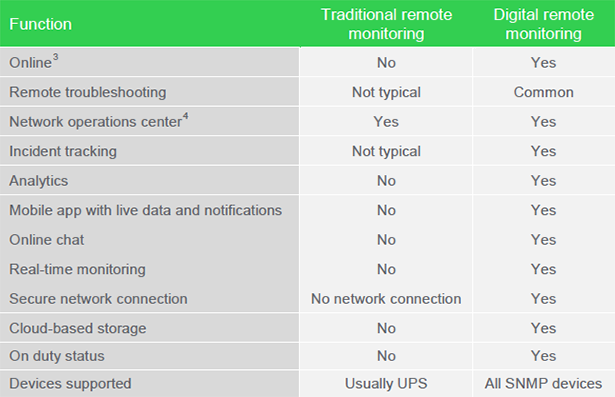

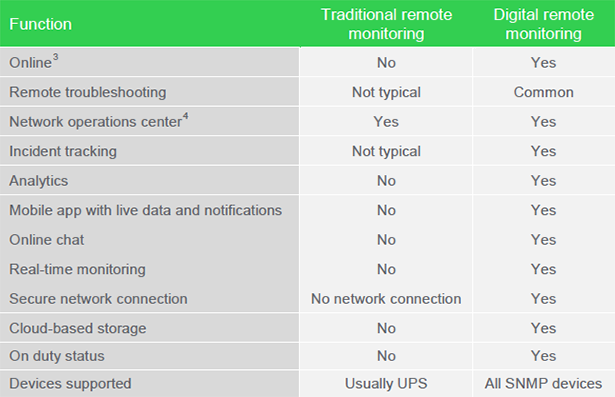

available today (see Table 1 for comparison). These new services incorporate

technology such as cloud computing, analytics, and mobile apps.

Inside a data center today, a manager has no idea when they should replace a

component in their UPS or cooling unit that is about to fail. In contrast, outside the

data center, a driver gets an instant notification on their smart phone that their

normal route is backed up 20 minutes with a recommended alternate route. This

disparity has prompted us to look at how advancements and trends in IT are changing

data center monitoring and, in turn, how digital remote monitoring will change

data center operations and maintenance.

The general concept of monitoring today is widely understood and anyone with a

fitness tracker, continuous glucose monitor, or a learning thermostat has had

firsthand experience in how advances in IT have improved their lives. In particular,

users benefit from immediate knowledge from their devices (e.g. calories burned,

blood sugar level, etc.). However, most data centers today are not benefiting from

big data analytics and machine learning. These and five other trends are poised

to revolutionize how managers operate and maintain data centers.

This paper explains seven trends that are defining next-generation data center

monitoring and its benefits. We describe the requirements to attain these benefits,

and describe how data center operations and maintenance will evolve in the future.

Table 1 — Comparison between traditional and digital remote monitoring

Difference between traditional and digital

A key differentiator between these two types of remote monitoring comes down to the definition of online3: “connected

to a computer, a computer network, or the Internet.”

Traditional remote monitoring is not an online service; therefore, it cannot provide real-time monitoring. Instead, it relies on intermittent status updates (usually via email).

Digital remote monitoring is online and connected to a data center (usually through a gateway), which allows for real-time monitoring. In addition, it uses IT services such as cloud storage and data analytics.

Trends Influencing Monitoring

Monitoring services available 10 years ago were desktop-based, limited in data

output, and largely reactive (i.e. depended on humans to interpret what was

wrong). Digital remote monitoring has resolved these limitations through technology,

and over the next few years more limitations will be addressed by technology.

We see seven technology trends that are influencing data center monitoring.

- Embedded system performance and cost improvements

- Cyber security

- Cloud computing

- Big data analytics

- Mobile computing

- Machine learning

- Automation for labor efficiency

We briefly describe these trends in this section and, in the next section, we describe

the digital remote monitoring requirements needed to comprehend, mitigate, or take

advantage of these trends.

Embedded system performance and cost improvements

Embedded systems are found in nearly all data center devices including cooling

units, PDUs, UPS, chillers, etc. and basically control the operation of these devices.

Without the outputs from these embedded systems, there would be nothing to

monitor. Embedded systems have improved significantly over the years in terms of

computing capability, data storage, communications, and pricing. This means data

center devices can provide much more data today than they could 10 years

ago. We estimate that the total number of alarms and notifications available from

power and cooling devices has increased by over 300% over the last ten years. This

increase comes from a combination of more sensors, more features, more algorithms,

and higher sampling rates. The more data available, the more digital remote

monitoring can infer helpful information from data center devices, as we describe

later in the paper.

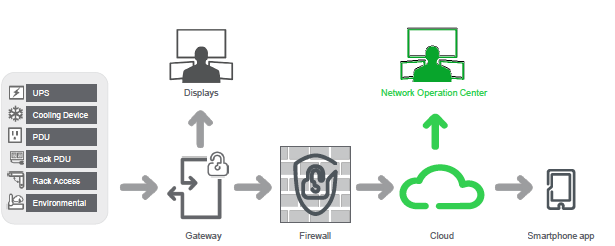

Cyber security

Cyber security is one of the biggest concerns5 among data center managers around

the world. Not only are they concerned about IT equipment vulnerability, but also

physical infrastructure equipment that has been exploited as “backdoors” into the IT

network. Digital remote monitoring, as well as other cloud-based services, must

comprehend cyber risks even before the product or service is created. Digital

service providers need to demonstrate their secure development lifecycle (SDL)

practices and policies. Ask for their SDL policy, and validate that the lifecycle

includes phases that focus on training, security requirements, design, development

(e.g. coding standards), verification, release, deployment, and response. In terms or

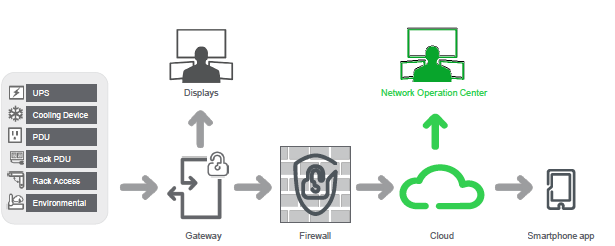

architecture, there should be a single point of entry into your network using a

gateway (usually software), and all devices communicate with the gateway. Figure

1 illustrates a recommended digital remote monitoring architecture.

There are several other factors that data center managers and security stakeholders

must consider when evaluating a vendor and their digital remote monitoring service,

therefore we discuss this topic further in White Paper 239, Addressing Cyber

Security Concerns of Data Center Remote Monitoring Platforms.

Figure 1 — Recommended digital

monitoring architecture

Cloud computing

Cloud computing is a highly scalable method of storing data and processing that

data. Cloud computing is what enables digital remote monitoring services. IT

services such as predictive analytics and machine learning can run on a cloud

computing platform to further increase the value of data center monitoring.

Big data analytics

Big data analytics may seem far from the mainstream but it applies to activities

performed today such as condition based maintenance (also referred to as predictive

maintenance) for plane engines and predicting how many products manufacturers

make for the holidays. A spreadsheet or database can only go so far to identify

patterns in data. Big data analytics is required when6:

- data volumes increase (e.g. petabytes of data)

- data becomes unstructured (i.e. data variety like emails, free-form text fields,

or trouble tickets)

- data is processed in real-time (this is known as velocity)

Mobile computing

Global use of mobile phones to access the internet has grown year over year for the

last several years while access through desktops has decreased year over year7.

This trend applies also to data center managers who are increasingly asked to do

more with fewer resources. Mobile computing helps alleviate this burden by allowing

managers to float between locations without being disconnected from daily

operations.

Machine learning

Machine learning is related to data analytics in that it uses data to make predictions, but it differs in that it improves the model by using results from previous learning8.

Machine learning can be used to drive an autonomous vehicle, recognize speech, recognize images, choose a Netflix movie, or accurately model the PUE of a very complex Google data center. In all of these examples, the driving, the recognition, etc. improve over time.

Automation for labor efficiency

Automation for labor efficiency is not a “hot” trend but it’s particularly relevant to data

center managers in an increasingly competitive business environment where they

are being asked to do more with less. This is where automation through digital

remote monitoring can help.

Digital monitoring benefits

The first trend in the previous section (Embedded system performance and cost

improvements) creates an overarching challenge for data centers. The amount of

data to track is increasing rapidly, making it harder for data center managers to

interpret what it means and take the right actions. This is unsustainable, especially

when you operate a data center that’s already understaffed. Some other challenges

managers face include:

- A multitude of alarms from the same device when one alarm notification would

have sufficed. This can actually cause alarm fatigue where the same repeated

alarm will eventually be ignored due to human nature9.

- Each power and cooling device tends to have its own native management

solution. This lack of a unified monitoring platform and standard architecture

adds to operational complexity, a detriment to an understaffed data center.

- Calling customer support for help means dialing through a list of menus, waiting,

and reaching someone who creates a trouble ticket but will likely have to escalate to

resolve the problem.

A digital remote monitoring service that comprehends, mitigates, or takes advantage

of the trends discussed above can overcome these challenges and provide the

following benefits. Digital remote monitoring requirements are provided for each

benefit.

- Reduced downtime / lower mean time to repair

- Reduced operations overhead

- Lowered cost of maintenance and services

- Improved energy efficiency

- Scalability

Reduced downtime / lower mean time to repair

A review of downtime events typically reveals a series of state changes that collectively

lead to downtime. In other words, a single failure event normally does not result in downtime. The whole point of monitoring data centers is to reduce the risk of downtime by identifying and addressing a state change before others occur. In this context, digital remote monitoring services should meet the following requirements.

- Network operations center experts troubleshooting data center incidents

should be screened and trained on cyber security. The more years of experience

in offering digital remote monitoring, the more likely that an alarm, notification,

or failure is resolved without causing downtime or making the problem

worse. Experience in this case means that experts have learned through “near

misses” over the course of their careers. Research in aviation and

healthcare10 has shown that “near misses” are key to learning. Understanding

and documenting why these incidents occurred reduce the risk of future errors.

- Documenting all incidents must be part of any digital remote monitoring system.

- The service should reduce break-fix resolution time through alarming, remote

troubleshooting, and visibility into device lifecycle. This troubleshooting should

be delivered by experts monitoring your data center 7x24.

- Experts monitoring your data center should have a list of data center contacts

to call if a critical event occurs. Data center managers should be able to

update this list at any time, ideally through a mobile app.

- Compatibility with third-party devices in a data center improves the situational

awareness of domain experts in the NOC. Knowing the status of all devices

improves the chances of solving or at least understanding a problem or potential

problem.

- Predictive analytics and remote troubleshooting should be used to reduce the

number of times you need a service person working on your equipment. It’s all

too common to hear about technicians showing up multiple times either because

they needed help, didn’t have the right expertise, or didn’t have the right

part. By understanding the problem fully, field service engineers can come

prepared with the correct parts and tools thereby increasing the likelihood that

something is repaired on the first visit.

Reduced operations overhead

The following are requirements that allow a digital remote monitoring service to

reduce operations overhead, leaving staff to focus on more important proactive

tasks that add value to the business.

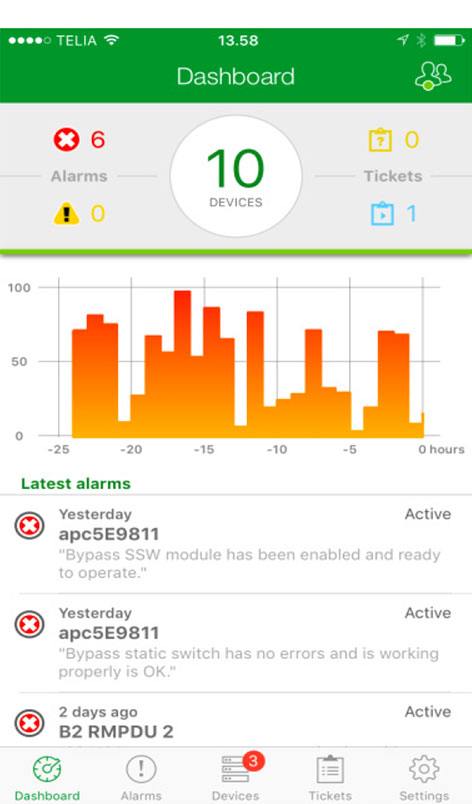

- Network operations center (Figure 2) staffed with the domain experts that

support your data center(s).

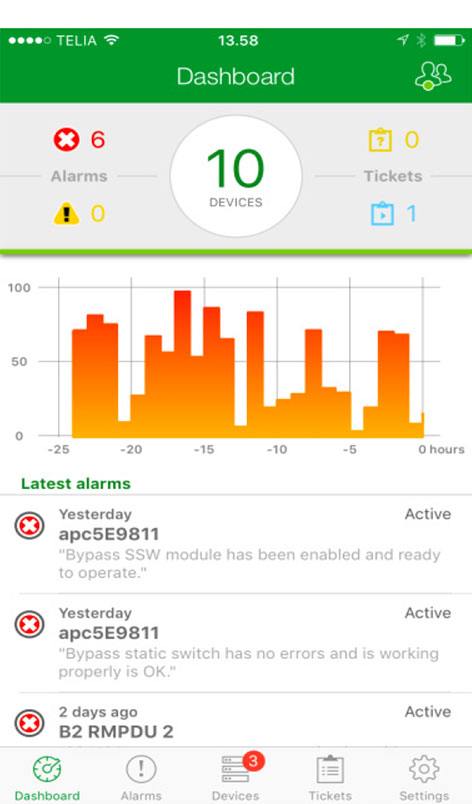

- A mobile app (Figure 3) allows data center managers and administrators immediate

access to data and the status of their data center from anywhere at

any time (not to mention peace of mind). Most people carry their phone; therefore,

it’s logical that it be the primary means of receiving information related to

the health of your data center. Logging into a desktop (sometimes requiring

VPN) to troubleshoot a problem is time consuming and inconvenient.

- Automatic trouble ticket generation should be provided through a mobile app.

This can save a significant amount of time as it avoids tech support phone

menus and explaining the same issue to multiple representatives. This aids

significantly in reducing time to resolution. A related best practice is to track

incidents via chats, messages, etc.

Figure 2 — Example of a network

operations center (NOC)

Figure 3 — Example of a digital

monitoring mobile app

- Online chat via mobile app as a means to collaborate with the team as well as

to gain instant access to domain experts in the NOC

- Fast on-boarding means that in about 30 minutes you can install the gateway,

auto discover devices, register the software, configure the smart phone app,

and begin monitoring your data center.

- Manually entering devices to be monitored is time consuming and allows for

human error. A digital remote monitoring system should auto-detect critical infrastructure

devices using Simple Network Management Protocol (SNMP).

Modbus TCP devices are not typically auto-detected because they need a device

definition file (DDF). Gateways typically scan a range of IP addresses

(user-specified), detect applicable devices, and present the data to the user.

- Event processing is similar to how hospitals triage patients. The most critical

alarms are prioritized in terms of notifications and actions. This practice reduces

the burden on the data center operators knowing that the NOC experts

will notify and guide them during an event that triggers multiple alarms.

- Event correlation and root cause analysis evaluates multiple alarms and deduces

possible causes and proposes possible solutions. This correlation process

can be done by domain experts in a NOC or a combination of machine

learning and experts. For example, one CRAH high temperature alarm may not be an issue, but six alarms on the same chilled water loop likely indicate a problem with a root cause such as a closed supply water valve.

- Alarm consolidation converts multiple alarms from the same device into a

single incident. This practice avoids wasted time having to acknowledge multiple

identical alarms. Furthermore, a workflow ticket should be automatically

generated for this incident, to inform you of who is currently working on the issue,

what’s been done so far, and to track its progress and eventual resolution.

- Contextual alarms provide the user with useful information like its origin (e.g.

data center X, data hall Y, rack 15C), who’s involved, number of alarms generated,

and what they should check. All this information should be communicated

via mobile app without requiring a phone call.

- Anyone who has searched the web for an error message in hope of solving a

problem has likely come across an online community where hundreds of users

post both questions and answers to common problems. This form of “crowd

sourcing” can save a significant amount of time in solving problems. All digital

remote monitoring services should include their own online community.
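The alarm consolidation and event correlation practices in the list above can be sketched in a few lines of Python. This is a minimal illustration, not a description of any vendor's implementation: the `Alarm` fields, the `HIGH_TEMP` alarm type, and the device and loop names are hypothetical, and the threshold of six devices simply mirrors the CRAH example given above.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alarm:
    device_id: str    # hypothetical device name, e.g. "CRAH-3"
    loop_id: str      # hypothetical chilled water loop the device sits on
    alarm_type: str   # hypothetical alarm code, e.g. "HIGH_TEMP"
    timestamp: float  # seconds since epoch


def consolidate(alarms):
    """Collapse repeated alarms from the same device into one incident each."""
    incidents = {}
    for alarm in sorted(alarms, key=lambda a: a.timestamp):
        key = (alarm.device_id, alarm.alarm_type)
        incident = incidents.setdefault(key, {
            "device_id": alarm.device_id,
            "loop_id": alarm.loop_id,
            "alarm_type": alarm.alarm_type,
            "first_seen": alarm.timestamp,
            "count": 0,
        })
        incident["count"] += 1
    return list(incidents.values())


def correlate_by_loop(incidents, alarm_type="HIGH_TEMP", min_devices=6):
    """Return loops where at least min_devices distinct devices raised alarm_type."""
    devices_per_loop = defaultdict(set)
    for incident in incidents:
        if incident["alarm_type"] == alarm_type:
            devices_per_loop[incident["loop_id"]].add(incident["device_id"])
    return sorted(loop for loop, devices in devices_per_loop.items()
                  if len(devices) >= min_devices)


# Six CRAH units on loop-A each repeat the same alarm three times;
# one unit on loop-B raises it once.
alarms = [Alarm(f"CRAH-{i}", "loop-A", "HIGH_TEMP", float(t))
          for i in range(6) for t in range(3)]
alarms.append(Alarm("CRAH-9", "loop-B", "HIGH_TEMP", 1.0))

incidents = consolidate(alarms)               # 19 raw alarms become 7 incidents
suspect_loops = correlate_by_loop(incidents)  # only loop-A reaches the threshold
```

In this sketch the operator acknowledges seven incidents instead of nineteen alarms, and the single loop-level finding is what a NOC expert (or a learned model) would investigate for a shared root cause.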

Improved energy efficiency

The more devices being monitored, the better the opportunity to improve the data

center efficiency. However, to make a useful inference about the data center

efficiency, the UPS load (at a minimum) must be measured as a proxy for the total

IT load. Without knowing the IT load, there is no basis upon which to assess

an increase or decrease in power and cooling infrastructure energy use. For example, if

chiller energy is trending upward, one cannot tell whether it’s due to a chiller problem or

to an increasing IT load. With IT load data, one can compare the power consumption of

all the devices in the power and cooling paths and look for anomalies compared to

the IT load. However, a more effective method for improving data center efficiency

is to measure PUE and compare it to a PUE model in real time.
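The chiller example above can be made concrete with a small Python sketch. It assumes, as a deliberate simplification, that chiller power should grow roughly in step with IT load; the function names and the 5% tolerance are hypothetical choices for illustration, and a real cooling plant also responds to outdoor conditions.

```python
def relative_change(old, new):
    """Fractional change from old to new (0.10 means +10%)."""
    if old <= 0:
        raise ValueError("old value must be positive")
    return (new - old) / old


def chiller_trend_explained_by_it_load(chiller_kw, it_kw, tolerance=0.05):
    """Return True if chiller power grew roughly in step with IT load.

    chiller_kw and it_kw are (earlier, later) pairs of average power in kW.
    tolerance is a hypothetical allowance for how far the growth rates may differ.
    """
    chiller_growth = relative_change(*chiller_kw)
    it_growth = relative_change(*it_kw)
    return abs(chiller_growth - it_growth) <= tolerance


# Chiller power up 10% while the UPS (IT) load is also up 10%: likely load growth.
load_driven = chiller_trend_explained_by_it_load((100.0, 110.0), (500.0, 550.0))

# Chiller power up 30% while the IT load is up only 1%: worth investigating.
suspicious = not chiller_trend_explained_by_it_load((100.0, 130.0), (500.0, 505.0))
```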

White Paper 154, Electrical Efficiency Measurement for Data Centers discusses how

an energy efficiency model works and describes a system for continuous measurement

while assessing the PUE against the model. When properly implemented,

electrical efficiency trends can be reported, and alerts generated based on out-of-bounds

conditions. Furthermore, an effective system can provide the ability to

diagnose the sources of inefficiency and suggest corrective action. This model-based

efficiency solution should also be continuously monitored by NOC personnel.
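As a minimal sketch of the model comparison described above, the Python below computes PUE (total facility power divided by IT load power) and flags an out-of-bounds condition when the measurement drifts from the modeled value. The function names and the 0.10 tolerance are assumptions made for illustration; they are not taken from White Paper 154.

```python
def pue(total_facility_kw, it_load_kw):
    """Power usage effectiveness: total facility power divided by IT load power."""
    if it_load_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_load_kw


def check_against_model(measured_pue, modeled_pue, tolerance=0.10):
    """Compare measured PUE with the model's prediction for the same conditions.

    tolerance is a hypothetical alert band expressed in PUE points.
    """
    deviation = measured_pue - modeled_pue
    return {"deviation": deviation, "out_of_bounds": abs(deviation) > tolerance}


# UPS load used as a proxy for IT load, as suggested earlier in this section.
measured = pue(total_facility_kw=750.0, it_load_kw=500.0)  # 1.5
result = check_against_model(measured, modeled_pue=1.45)   # within the alert band
```

A positive deviation means the data center is consuming more than the model predicts for the current IT load, which is the cue to start diagnosing sources of inefficiency.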

Scalability

Scalability is the ability for the digital remote monitoring system to accept additional

devices, or nodes, to monitor. Depending on how these systems are designed,

monitoring may be limited to a few thousand devices. Scalability isn’t typically a

problem for smaller data centers (e.g. 500kW IT load capacity) but is a serious

problem for larger data centers. Some data centers can have hundreds of thousands

of devices to monitor and require polling every few seconds; therefore, a

digital remote monitoring system should be designed using a horizontally-scalable,

cloud-based architecture. This means that as more devices are monitored, the

cloud service automatically adds more compute nodes to handle the monitoring.

Data center managers need to identify their requirements and then understand the

capabilities and limitations across the various monitoring services being evaluated.
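A rough capacity estimate helps show why horizontal scaling matters. The Python sketch below is illustrative only: the per-node throughput (`node_capacity_pps`) and the 25% headroom are hypothetical figures, and a real service would measure these rather than assume them.

```python
import math


def polls_per_second(device_count, poll_interval_s):
    """Aggregate polling rate when every device is polled once per interval."""
    return device_count / poll_interval_s


def nodes_required(device_count, poll_interval_s, node_capacity_pps, headroom=0.25):
    """Estimate the compute nodes needed to sustain the polling rate.

    node_capacity_pps is the polls per second one node can handle (hypothetical).
    headroom is spare capacity reserved for bursts such as alarm storms.
    """
    demand = polls_per_second(device_count, poll_interval_s) * (1 + headroom)
    return max(1, math.ceil(demand / node_capacity_pps))


# A large site: 200,000 devices polled every 5 seconds is 40,000 polls per second.
large_site = nodes_required(200_000, 5, node_capacity_pps=2_000)  # 25 nodes

# A small site: 2,000 devices at the same interval fits on a single node.
small_site = nodes_required(2_000, 5, node_capacity_pps=2_000)    # 1 node
```

Under these assumptions the same service spans one node for a small site and 25 for a large one, which is the growth a horizontally scalable design absorbs by adding nodes rather than by replacing the system.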

The evolution of data center operations and maintenance

Use of embedded sensors in clothing, in watches, and in other “wearables” will allow

doctors to predict when you’re getting sick or when you are at risk of a heart attack,

and numerous other insights. By analyzing fuel consumption data, an airline can

adjust its flight procedures like the position of its control surfaces to improve fuel

efficiency11. These are examples of the “Internet of Things” (IoT), where devices

communicate with each other, through a gateway, micro data center, and/or a cloud

data center, ultimately adding value to our lives and our businesses.

With this backdrop, it’s easier to see how data centers are fertile ground for improvements,

made possible through the trends described in this paper and IoT in

general. We see the following evolutions in operations and maintenance occurring

over the coming years inside small and large data centers alike.

Evolution in operations

- Just as autonomous cars are believed to experience fewer accidents due to

human error, so too will data centers experience less downtime due to human

error. Reduction in downtime will be accomplished primarily through machine

learning. As more data is collected on causes of downtime or near misses,

digital remote monitoring systems will be able to predict when a data center is

at risk of a downtime event occurring and provide data center operators appropriate

steps to avoid it.

- Data center efficiency will improve in two ways: more accurate device efficiency

models and more accurate data center models. This accuracy will come as a result of data

gathered from actual operation in different data centers, in different climates

under different loads. The data center model, using machine learning, will

eventually have enough data that it can suggest what cooling system settings

will result in the lowest power consumption. As mentioned in the “Improved

energy efficiency” subsection above, the data center model is also used to

compare the predicted data center energy consumption with the actual consumption

and alert data center operators when they deviate.

- When a data center manager receives a data center alarm, their mobile app

will be able to tell them what steps they need to take to correct whatever is

wrong. More complicated procedures may be done with augmented reality

technology where the person wears a pair of special glasses and images appear

instructing them on exactly what to do.

- Weather data (and perhaps electric utility data) will be used to suggest when a

data center should switch to generator in anticipation of a power outage.

Evolutions in maintenance

- Traditional maintenance models charge customers for scheduled visits because

manufacturers lack data and analytics to accurately predict when something

will break or is running inefficiently. Data centers will move from calendar-based

maintenance to condition based maintenance. This will also encourage

device manufacturers to use more sensors and algorithms that improve

component failure prediction, improve contextual alarms, and ultimately

reduce data center maintenance costs.

- Manufacturers won’t need to rely on warranty cards and phone calls to track

component failures. Instead, they will rely on a data lake and analytics that will

provide them with rich insights, not only on component failures in the field, but

how to improve the reliability of future products. The most compelling and valuable

part of this evolution for data center managers is the speed at which this will occur. Today it takes much too long for manufacturers to gather enough data, to recognize a problem, then to understand what’s causing it, and finally

to find a way to fix it.

- The insights from field data and analytics will make field service visits more

predictable. For example, there will be an increased likelihood that something

is repaired on the first visit and lower risk of service defects (either during or

after service is complete). Ultimately this translates into higher data center reliability

and lower maintenance costs for data center managers.

- Everything that field service technicians do will be logged and correlated with

what has happened. By collecting enough of this data, manufacturers will know

that when a series of particular events happens in a particular order,

it means a given action and/or parts are required. This will evolve into a

digital remote monitoring service automatically dispatching a field service

technician with the correct work order and spare parts.

- Traditionally you need at least two people to perform maintenance actions like

running a generator test: one person reading the instructions and validating

that they are performed correctly, and a second one repeating the instructions and

performing the action. With machine learning we may only need one person.
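The idea of recognizing a series of particular events in a particular order, described in the list above, can be sketched as an ordered-subsequence match in Python. The event names, the playbook contents, and the work order fields are all hypothetical; a real service would derive such signatures from logged field service data.

```python
def contains_in_order(events, signature):
    """True if every signature event appears in events in the same order (gaps allowed)."""
    remaining = iter(events)
    # "step in remaining" advances the iterator, so later steps must occur later.
    return all(step in remaining for step in signature)


def match_work_order(events, playbook):
    """Return the work order for the first matching signature, or None."""
    for signature, work_order in playbook:
        if contains_in_order(events, signature):
            return work_order
    return None


# Hypothetical playbook learned from past service visits.
PLAYBOOK = [
    (("FAN_SPEED_HIGH", "BEARING_TEMP_HIGH", "FAN_FAULT"),
     {"action": "replace fan assembly", "parts": ["fan assembly"]}),
]

observed = ["FAN_SPEED_HIGH", "FILTER_WARNING", "BEARING_TEMP_HIGH", "FAN_FAULT"]
order = match_work_order(observed, PLAYBOOK)  # matches the fan signature
```

Because unrelated events may occur between the steps of a signature, the match tolerates gaps but still insists on order, so the same events arriving in a different sequence do not trigger a dispatch.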

The value of the network

The term “network effect” gained widespread awareness during the rise of Facebook as a leading social network platform. The term basically means that as more people

use a particular product or service, the more value users of that product or service

will realize. The telephone is an often-used example of the network effect. If only

one person in the world had a phone, there would be no value in it because they

couldn’t talk to anyone else. But when millions of people have and use one, it

becomes valuable. This is true of digital remote monitoring services.

If only one data center manager used a digital remote monitoring service like the one described in this paper, they wouldn’t get the value of data analytics and condition based maintenance. That value is attained very quickly as more data

centers use the service and the collective data is analyzed to provide insights. For

example, if 100,000 data centers used the service, a large percentage of these data

centers are likely to have an air-cooled packaged chiller cooling architecture. With

this amount of data, analytics could suggest changes to their cooling system and the

estimated savings these changes will have on the energy bill.

Conclusion

Data centers are on a path to become more reliable and efficient through the use of

digital remote monitoring and condition based maintenance made possible through

technologies like big data and machine learning. However, this can only happen

with platforms that take advantage of the data constantly generated by the physical

infrastructure in a data center. Data center operators should review the digital

remote monitoring requirements provided in this paper as they begin to assess their

own data center evolution.

1 APC traditional remote monitoring services have been available since 2000

2 http://esmarchitecture.com/key-concepts/business-it-digital-services.html

3 http://www.merriam-webster.com/dictionary/online

4 Network operations center (NOC) is also referred to as a Service Bureau. It is the centralized function

responsible for monitoring data centers.

5 2 of the Top 10 Global (technology) Risks 2015 include: Data fraud/theft and cyber attacks, cyber

attacks among most likely high-impact risks (World Economic Forum, Global Risks 2015)

6 https://en.wikipedia.org/wiki/Big_data

7 http://gs.statcounter.com/#desktop+mobile+tablet-comparison-ww-yearly-2010-2016

8 https://www.quora.com/What-is-the-difference-between-Data-Analytics-Data-Analysis-Data-Mining-Data-Science-Machine-Learning-and-Big-Data-1

9 https://medicineforreal.wordpress.com/2013/12/23/hear-no-evil/

10 R. P. Mahajan, Critical incident reporting and learning, p. 69

11 Porter M., Heppelmann J., How Smart, Connected Products Are Transforming Competition, 2014, pg 4

About the Author

Victor Avelar is the Director and Senior Research Analyst at Schneider Electric’s Data Center

Science Center. He is responsible for data center design and operations research, and consults

with clients on risk assessment and design practices to optimize the availability and efficiency of

their data center environments. Victor holds a bachelor’s degree in mechanical engineering from

Rensselaer Polytechnic Institute and an MBA from Babson College. He is a member of AFCOM.

Resources

Addressing Cyber Security Concerns of Data Center Remote Monitoring Platforms

White Paper 239

Electrical Efficiency Measurement for Data Centers

White Paper 154

Power and Cooling Capacity Management for Data Centers

White Paper 150

Browse all white papers

whitepapers.apc.com

Browse all TradeOff Tools™

tools.apc.com

Contact us

For feedback and comments about the content of this white paper:

Data Center Science Center

dcsc@schneider-electric.com

If you are a customer and have questions specific to your data center project:

Contact your Schneider Electric representative at

www.apc.com/support/contact/index.cfm